---

license: mit

task_categories:

- image-to-video

tags:

- video-generation

- motion-control

- point-trajectory

---

# MoveBench of Wan-Move

[Paper](https://arxiv.org/abs/2512.08765)

[Code](https://github.com/ali-vilab/Wan-Move)

[Model (Hugging Face)](https://huggingface.co/Ruihang/Wan-Move-14B-480P)

[Model (ModelScope)](https://modelscope.cn/models/churuihang/Wan-Move-14B-480P)

[Dataset (Hugging Face)](https://huggingface.co/datasets/Ruihang/MoveBench)

[Video](https://www.youtube.com/watch?v=_5Cy7Z2NQJQ)

[Project page](https://wan-move.github.io/)

## MoveBench: A Comprehensive and Well-Curated Benchmark to Assess Motion Control in Videos

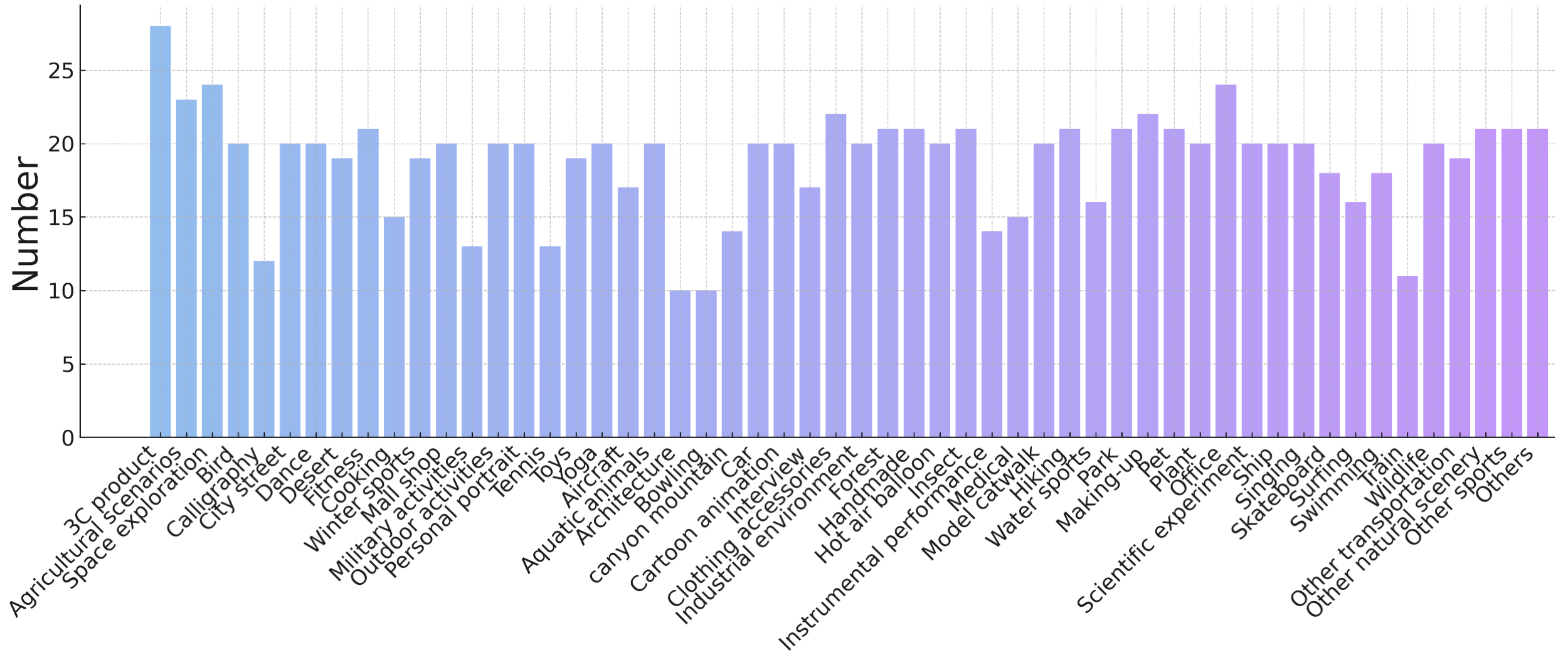

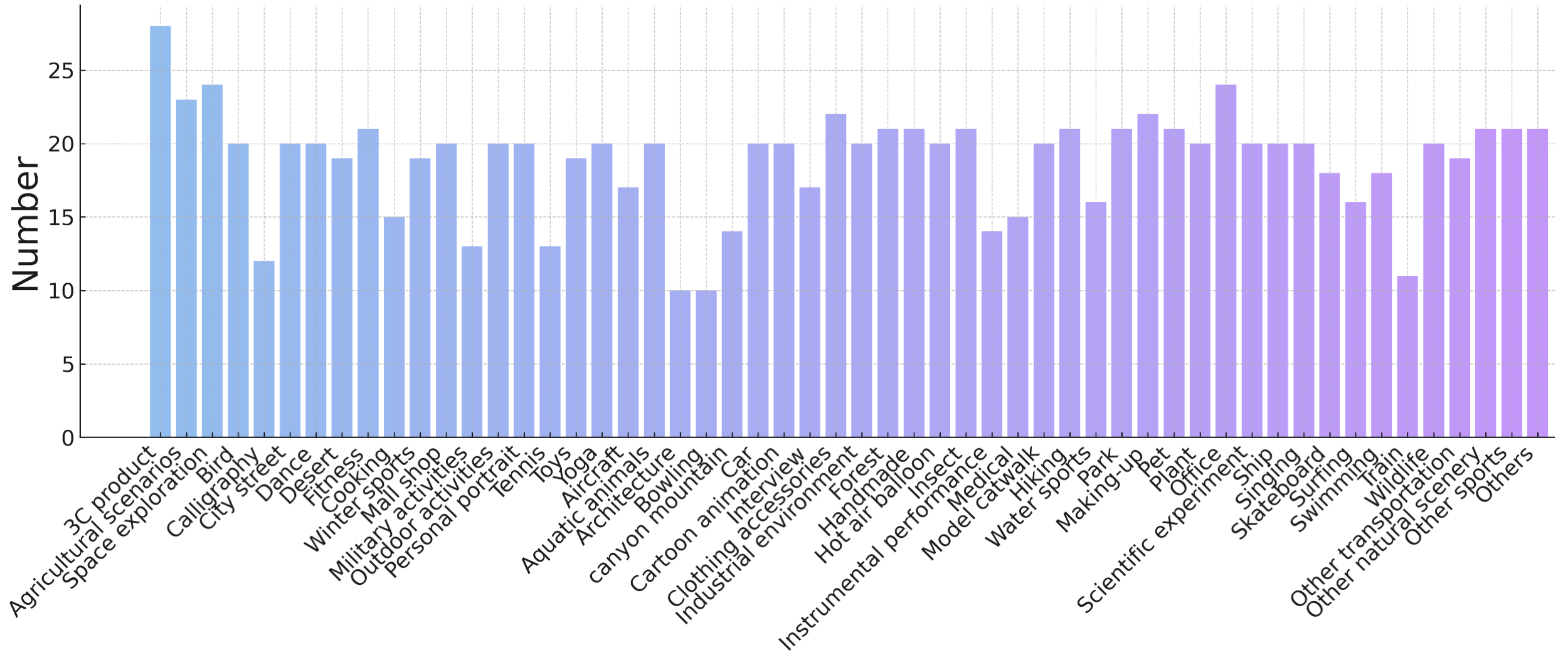

MoveBench evaluates fine-grained, point-level motion control in generated videos. We group videos from the [Pexels](https://www.pexels.com/videos/) library into 54 content categories with 10-25 videos each, yielding 1018 cases that cover a broad range of scenarios. Every clip is 5 seconds long to facilitate evaluation of long-range dynamics, and each is paired with detailed motion annotations for a single object. Additionally, 192 clips have motion annotations for multiple objects.

During annotation, annotators click on a target region in the first frame, prompting SAM to generate an initial mask. When the mask exceeds the desired area, annotators add negative points to exclude irrelevant regions. After annotation, each video contains at least one point trajectory indicating a representative motion, and 192 videos additionally include multiple-object motion trajectories.

Everyone is welcome to use it!

## Statistics

The construction pipeline of MoveBench

Balanced sample number per video category

Comparison with related benchmarks

## Download

Download MoveBench from Hugging Face:

```sh
huggingface-cli download Ruihang/MoveBench --local-dir ./MoveBench --repo-type dataset
```

Then extract the archives:

```sh
tar -xzvf en.tar.gz
tar -xzvf zh.tar.gz
```
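For scripted setups, the same extraction step can be sketched in Python with the standard library. This is only an illustration: the helper name `extract_archives` is hypothetical, and it assumes both archives sit at the top level of the download directory.

```python
import tarfile
from pathlib import Path


def extract_archives(root, names=("en.tar.gz", "zh.tar.gz")):
    """Extract each archive found under `root` into `root`.

    Hypothetical helper; assumes the archives sit directly inside `root`.
    Returns the names of the archives that were actually extracted.
    """
    root = Path(root)
    extracted = []
    for name in names:
        archive = root / name
        if not archive.is_file():
            continue  # skip archives that were not downloaded
        with tarfile.open(archive, "r:gz") as tar:
            tar.extractall(path=root)
        extracted.append(name)
    return extracted
```

Calling `extract_archives("./MoveBench")` would then play the role of the two `tar` commands above.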

The file structure will be:

```
MoveBench
├── en # English version
│   ├── single_track.txt
│   ├── multi_track.txt
│   ├── first_frame
│   │   ├── Pexels_videoid_0.jpg
│   │   ├── Pexels_videoid_1.jpg
│   │   ├── ...
│   ├── first_frame_mask
│   │   ├── single
│   │   │   ├── Pexels_videoid_0_mask_idx.png
│   │   │   ├── Pexels_videoid_1_mask_idx.png
│   │   │   ├── ...
│   │   ├── multi
│   │   │   ├── Pexels_videoid_0_mask_idx.png
│   │   │   ├── Pexels_videoid_1_mask_idx.png
│   │   │   ├── ...
│   ├── video
│   │   ├── Pexels_videoid_0.mp4
│   │   ├── Pexels_videoid_1.mp4
│   │   ├── ...
│   ├── track
│   │   ├── single
│   │   │   ├── Pexels_videoid_0_tracks.npy
│   │   │   ├── Pexels_videoid_0_visibility.npy
│   │   │   ├── ...
│   │   ├── multi
│   │   │   ├── Pexels_videoid_0_tracks.npy
│   │   │   ├── Pexels_videoid_0_visibility.npy
│   │   │   ├── ...
├── zh # Chinese version
│   ├── single_track.txt
│   ├── multi_track.txt
│   ├── first_frame
│   │   ├── Pexels_videoid_0.jpg
│   │   ├── Pexels_videoid_1.jpg
│   │   ├── ...
│   ├── first_frame_mask
│   │   ├── single
│   │   │   ├── Pexels_videoid_0_mask_idx.png
│   │   │   ├── Pexels_videoid_1_mask_idx.png
│   │   │   ├── ...
│   │   ├── multi
│   │   │   ├── Pexels_videoid_0_mask_idx.png
│   │   │   ├── Pexels_videoid_1_mask_idx.png
│   │   │   ├── ...
│   ├── video
│   │   ├── Pexels_videoid_0.mp4
│   │   ├── Pexels_videoid_1.mp4
│   │   ├── ...
│   ├── track
│   │   ├── single
│   │   │   ├── Pexels_videoid_0_tracks.npy
│   │   │   ├── Pexels_videoid_0_visibility.npy
│   │   │   ├── ...
│   │   ├── multi
│   │   │   ├── Pexels_videoid_0_tracks.npy
│   │   │   ├── Pexels_videoid_0_visibility.npy
│   │   │   ├── ...
├── bench.py # Evaluation script
├── utils # Evaluation code modules
```
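As a rough illustration of how this layout might be navigated, the sketch below gathers the files that belong to one case. The helper names `case_files` and `load_tracks` are hypothetical, and the filename patterns are read off the tree above. The array shapes of the `.npy` files are not documented here, so the loader simply returns whatever NumPy reads.

```python
from pathlib import Path


def case_files(root, lang, case_id, mode="single"):
    """Collect the paths for one MoveBench case (hypothetical helper).

    Assumes the directory layout shown in the tree above. `case_id` is the
    file stem, i.e. the name of a file in `video` without its `.mp4` suffix.
    """
    base = Path(root) / lang
    mask_dir = base / "first_frame_mask" / mode
    return {
        "first_frame": base / "first_frame" / f"{case_id}.jpg",
        "video": base / "video" / f"{case_id}.mp4",
        # Mask names end in an index, so match them with a glob pattern.
        "masks": sorted(mask_dir.glob(f"{case_id}_mask_*.png")),
        "tracks": base / "track" / mode / f"{case_id}_tracks.npy",
        "visibility": base / "track" / mode / f"{case_id}_visibility.npy",
    }


def load_tracks(files):
    """Load the trajectory and visibility arrays for one case (needs NumPy)."""
    import numpy as np  # imported lazily so path handling works without NumPy

    return np.load(files["tracks"]), np.load(files["visibility"])
```

For example, `case_files("./MoveBench", "en", some_id, mode="multi")` would point at the multi-object masks and tracks for `some_id`, where `some_id` is a placeholder for a real file stem.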

For evaluation, please refer to the [Wan-Move](https://github.com/ali-vilab/Wan-Move) codebase.

## Citation

If you find our work helpful, please cite us.

```
@article{chu2025wan,
  title={Wan-move: Motion-controllable video generation via latent trajectory guidance},
  author={Chu, Ruihang and He, Yefei and Chen, Zhekai and Zhang, Shiwei and Xu, Xiaogang and Xia, Bin and Wang, Dingdong and Yi, Hongwei and Liu, Xihui and Zhao, Hengshuang and others},
  journal={arXiv preprint arXiv:2512.08765},
  year={2025}
}
```

## Contact Us

If you would like to leave a message for our research team, feel free to send an [email](mailto:ruihangchu@gmail.com).
