Text Generation

fastText

Azerbaijani

wikilangs

nlp

tokenizer

embeddings

n-gram

markov

wikipedia

feature-extraction

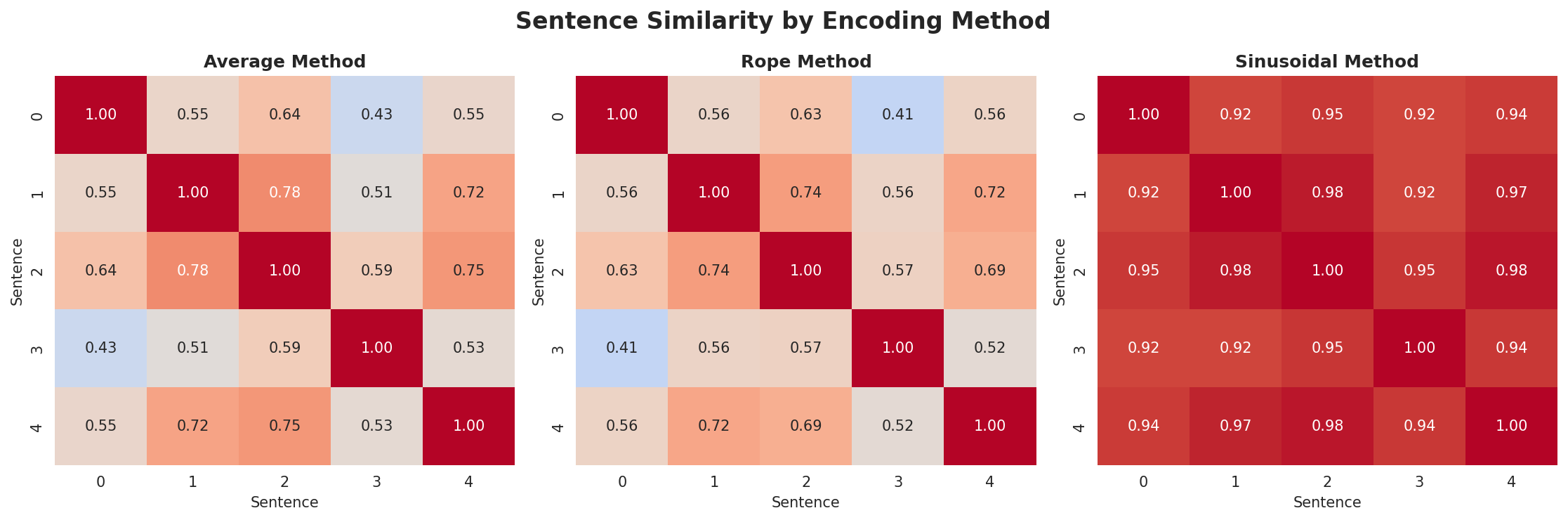

sentence-similarity

tokenization

n-grams

markov-chain

text-mining

babelvec

vocabulous

vocabulary

monolingual

family-turkic_oghuz

Instructions to use wikilangs/az with libraries, inference providers, notebooks, and local apps. Follow these links to get started.

- Libraries

- fastText

How to use wikilangs/az with fastText:

    from huggingface_hub import hf_hub_download
    import fasttext

    model = fasttext.load_model(hf_hub_download("wikilangs/az", "model.bin"))

- Notebooks

- Google Colab

- Kaggle
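Once the model is loaded with the fastText snippet above, a common next step is comparing word vectors. The following is a minimal sketch, not part of the original page. It assumes `model.bin` in `wikilangs/az` is a fastText model that exposes word vectors through `get_word_vector`. The helper names `cosine_similarity`, `compare_words`, and `load_az_model` are hypothetical and introduced only for illustration.

```python
import math


def cosine_similarity(u, v):
    """Return the cosine similarity of two equal-length numeric vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        # A zero vector has no direction; treat the similarity as 0.
        return 0.0
    return dot / (norm_u * norm_v)


def compare_words(model, word_a, word_b):
    """Compare two words using any object that offers get_word_vector(word)."""
    return cosine_similarity(
        model.get_word_vector(word_a),
        model.get_word_vector(word_b),
    )


def load_az_model():
    """Download and load the model (needs network, huggingface_hub, fasttext).

    Assumption: the repository file "model.bin" is a fastText binary,
    as the loading snippet on this page suggests.
    """
    from huggingface_hub import hf_hub_download
    import fasttext

    return fasttext.load_model(hf_hub_download("wikilangs/az", "model.bin"))
```

For example, `compare_words(load_az_model(), "kitab", "dəftər")` would score two arbitrarily chosen Azerbaijani words. The similarity is computed in plain Python so the helper can be tried with any stand-in object before downloading the model.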

- Xet hash: c192970c77f15a61cd837081c7b423f90149b2bea9a7f2afcfbb3f6da6960c8c

- Size of remote file: 115 kB

- SHA256: 00ea307dd570d2ec6a9eb14a3de01cc583026cd51015837e2044b45a4bcb46d1
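The SHA256 value listed above can be used to check a downloaded copy of the file it describes. The page does not say which file that is, so the sketch below takes the path as a parameter. The helper names `sha256_of_file` and `matches_expected` are hypothetical, and only Python's standard `hashlib` is used.

```python
import hashlib

# Digest copied from the listing above; the file it belongs to is not named here.
EXPECTED_SHA256 = "00ea307dd570d2ec6a9eb14a3de01cc583026cd51015837e2044b45a4bcb46d1"


def sha256_of_file(path, chunk_size=1 << 20):
    """Hash a file in chunks so large files do not have to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_expected(path, expected_hex=EXPECTED_SHA256):
    """Return True if the file's SHA256 equals the expected hex digest."""
    return sha256_of_file(path) == expected_hex.strip().lower()
```

Calling `matches_expected(local_path)` on the downloaded file should return `True` if the copy is intact, assuming `local_path` points at the file the digest was published for.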
