AttnSleep

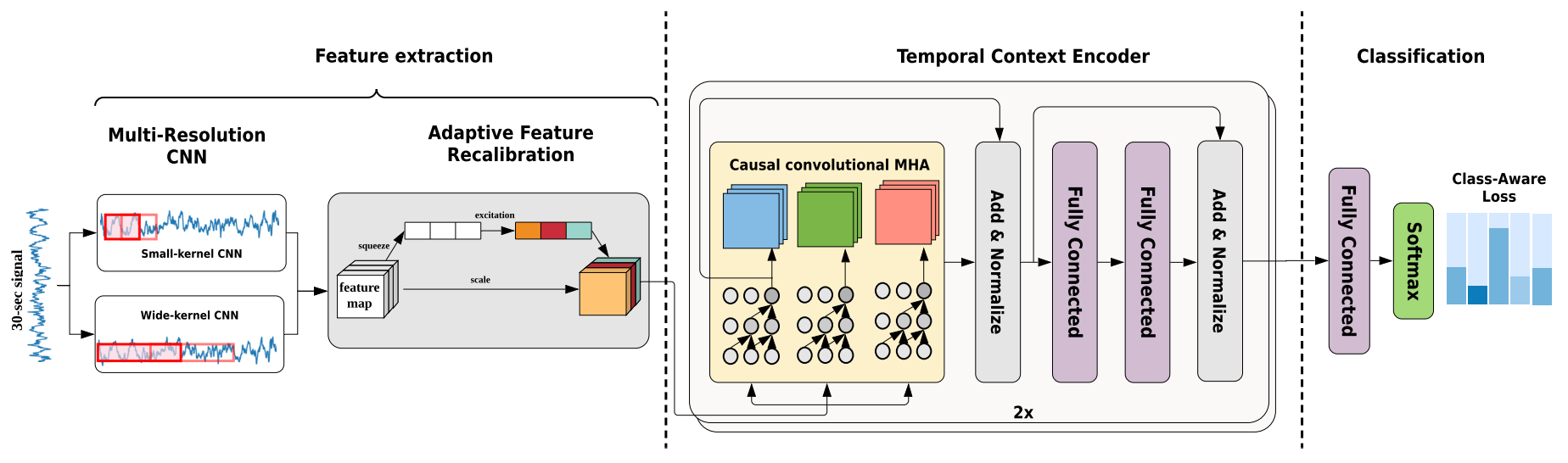

Sleep staging architecture from Eldele et al. (2021) [Eldele2021].

Architecture-only repository. Documents the braindecode.models.AttnSleep class. No pretrained weights are distributed here. Instantiate the model and train it on your own data.

Quick start

pip install braindecode

from braindecode.models import AttnSleep

model = AttnSleep(
    n_chans=2,
    sfreq=100,
    input_window_seconds=30.0,
    n_outputs=5,
)

The signal-shape arguments above are illustrative values; adjust them to match your recording.
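As a rough illustration of how these arguments relate, the number of time samples per window is the sampling rate multiplied by the window length. The sketch below assumes the usual braindecode input layout of (batch, channels, time); `expected_input_shape` is a hypothetical helper written for this example, not part of braindecode.

```python
def expected_input_shape(batch_size, n_chans, sfreq, input_window_seconds):
    """Return the (batch, channels, time) shape for one batch of windows.

    Hypothetical helper for illustration; not part of braindecode.
    """
    # Samples per window = sampling rate (Hz) * window length (s).
    n_times = int(round(sfreq * input_window_seconds))
    return (batch_size, n_chans, n_times)


# Same signal-shape values as the quick start, with an assumed batch of 8.
shape = expected_input_shape(
    batch_size=8, n_chans=2, sfreq=100, input_window_seconds=30.0
)
print(shape)  # (8, 2, 3000)
```

A recording sampled at a different rate or cut into windows of a different length changes the last dimension, so `sfreq` and `input_window_seconds` should be set together.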

Documentation

- Full API reference: https://braindecode.org/stable/generated/braindecode.models.AttnSleep.html

- Interactive browser (live instantiation, parameter counts): https://huggingface.co/spaces/braindecode/model-explorer

- Source on GitHub: https://github.com/braindecode/braindecode/blob/master/braindecode/models/attn_sleep.py#L18

Architecture

Parameters

| Parameter | Type | Description |
|---|---|---|
| n_tce | int | Number of temporal context encoder (TCE) clones. |
| d_model | int | Input dimension of the TCE. Also the input dimension of the first FC layer and the output dimension of the second FC layer in the feed-forward block. Increase for a higher sampling rate or a longer signal. Must be divisible by n_attn_heads. |
| d_ff | int | Output dimension of the first FC layer and input dimension of the second FC layer in the feed-forward block. |
| n_attn_heads | int | Number of attention heads. Must be a factor of d_model. |
| drop_prob | float | Dropout rate in the PositionWiseFeedforward layer and the TCE layers. |
| after_reduced_cnn_size | int | Number of output channels produced by the convolution in the adaptive feature recalibration (AFR) module. |
| return_feats | bool | If True, return the features, i.e. the output of the feature extractor (before the final linear layer). If False, pass the features through the final linear layer. |
| n_classes | int | Alias for n_outputs. |
| input_size_s | float | Alias for input_window_seconds. |
| activation | nn.Module, default=nn.ReLU | Activation function class to apply. Should be a PyTorch activation module class such as nn.ReLU or nn.ELU. |
| activation_mrcnn | nn.Module, default=nn.GELU | Activation function class to apply in the multi-resolution CNN (MRCNN) module. Should be a PyTorch activation module class such as nn.ReLU or nn.GELU. |
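The constraints between d_model, n_attn_heads, and d_ff can be checked before building the model. The sketch below is plain Python with two hypothetical helpers (neither is part of braindecode). It assumes that attention splits d_model evenly across heads and that the feed-forward block is two fully connected layers with biases, as the parameter descriptions suggest. The numeric values are illustrative and not necessarily the library defaults.

```python
def attention_head_dim(d_model, n_attn_heads):
    """Return the per-head width, or raise if d_model does not split evenly."""
    if d_model % n_attn_heads != 0:
        raise ValueError(
            f"d_model={d_model} is not divisible by n_attn_heads={n_attn_heads}"
        )
    return d_model // n_attn_heads


def feed_forward_param_count(d_model, d_ff):
    """Count weights and biases of FC(d_model -> d_ff) then FC(d_ff -> d_model).

    Assumes two plain fully connected layers, each with a bias term.
    """
    first = d_model * d_ff + d_ff        # first FC layer: weights + biases
    second = d_ff * d_model + d_model    # second FC layer: weights + biases
    return first + second


# Illustrative values only; see the API reference for the actual defaults.
print(attention_head_dim(d_model=80, n_attn_heads=5))   # 16
print(feed_forward_param_count(d_model=80, d_ff=120))   # 19400
```

Running a check like this first turns a mismatched d_model and n_attn_heads pair into a readable error instead of a shape failure deep inside the attention layers.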

References

- [Eldele2021] E. Eldele et al., "An Attention-Based Deep Learning Approach for Sleep Stage Classification With Single-Channel EEG," in IEEE Transactions on Neural Systems and Rehabilitation Engineering, vol. 29, pp. 809-818, 2021, doi: 10.1109/TNSRE.2021.3076234.

- https://github.com/emadeldeen24/AttnSleep

- https://sleepdata.org/datasets/shhs

Citation

Cite the original architecture paper (see References above) and braindecode:

@article{aristimunha2025braindecode,
  title = {Braindecode: a deep learning library for raw electrophysiological data},
  author = {Aristimunha, Bruno and others},
  journal = {Zenodo},
  year = {2025},
  doi = {10.5281/zenodo.17699192},
}

License

BSD-3-Clause for the model code (matching braindecode). If you fine-tune from a checkpoint, the resulting weights inherit the license of that checkpoint and its training corpus.